The business blog

Why we don’t pay attention in virtual events – and how AI can help change that

It’s no secret that the average attention span is getting shorter. When even household-name streaming services like Netflix struggle to keep viewers, things only look bleaker for corporate video content that is, admittedly, wildly less entertaining than a true crime drama.

But can the latest advances in machine learning and AI help organization leaders turn the tide? Luckily, there are quite a few innovative technologies out there that can help make virtual events more engaging and enjoyable to all.

Before the virtual event

Schedule virtual events for when people are most likely to tune in and pay attention

By “pay attention,” we don’t just mean clicking the “Join” button. After more than two years of distributed work, we all know that simply having a video event run in the background does not necessarily mean we are fully taking in any of the information.

So even if common sense dictates that we don’t hold large live broadcasts first thing Monday morning or Fridays after 3 pm, things get a little more complicated once you rule those out.

And it gets even trickier if you’re tasked with scheduling across different continents and time zones.

You might be familiar with common practices in your own office – like a daily team stand-up at 9 am, or a semi-official coffee break that happens every Wednesday in the HQ cafeteria, so you’ll know not to pick those hours. But it’s safe to assume that your colleagues across global company outposts, from Hong Kong to Argentina, have certain rituals of their own. And even if you knew exactly what they were, the headache of accounting for all possible clashes is simply too much to deal with every time you need to schedule an internal communications event.

How to use AI to find the best broadcasting time slots

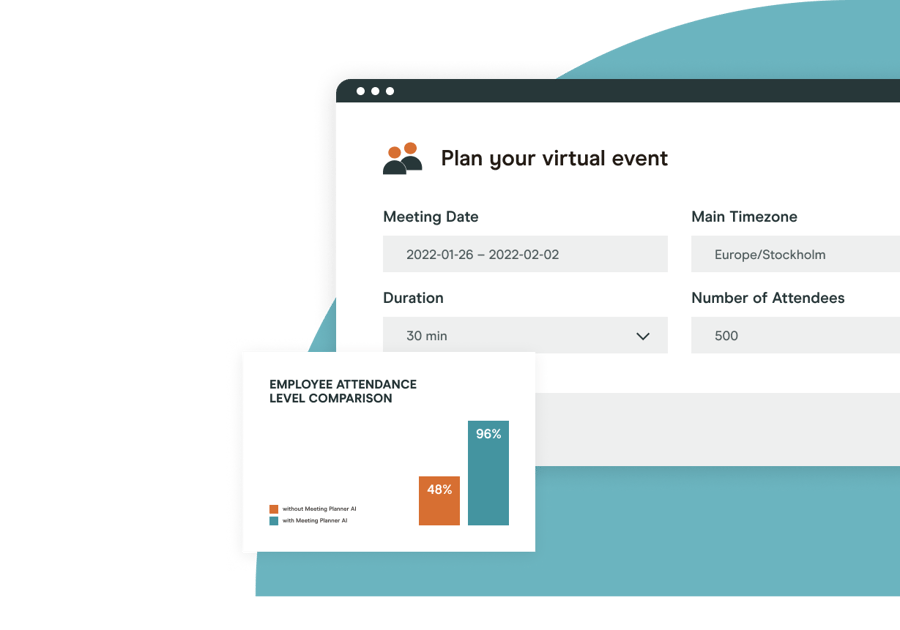

Modern technology has made it possible to easily find a time that will suit the highest number of people, accounting for their locations and time zones. Our own Video Analytics suite recently expanded to include an additional feature that analyzes large amounts of data from past virtual event performance to make intelligent scheduling predictions.

With this tool, you can simply select a time frame of up to 7 days and indicate which time zones you’re inviting people from to get concrete time and date recommendations for when to hold your next live video broadcast.
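To make the scheduling problem concrete, here is a minimal sketch of ranking time slots across time zones. It is not how the Video Analytics feature works internally: it is a much simpler heuristic that only counts invitees whose local time falls within working hours. The office list, headcounts, fixed UTC offsets, working hours, and the function names `reachable_invitees` and `best_slots` are all assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical offices: (fixed UTC offset, number of invitees).
# Fixed offsets ignore daylight saving time; zoneinfo.ZoneInfo names
# such as "Asia/Hong_Kong" would be the more robust choice in practice.
OFFICES = {
    "Hong Kong": (timezone(timedelta(hours=8)), 120),
    "Buenos Aires": (timezone(timedelta(hours=-3)), 80),
    "HQ (assumed UTC+1)": (timezone(timedelta(hours=1)), 300),
}

WORK_START, WORK_END = 9, 17  # assumed local working hours, end exclusive


def reachable_invitees(slot_utc, offices=OFFICES):
    """Count invitees for whom the slot is a weekday within working hours."""
    total = 0
    for tz, headcount in offices.values():
        local = slot_utc.astimezone(tz)
        if local.weekday() < 5 and WORK_START <= local.hour < WORK_END:
            total += headcount
    return total


def best_slots(start_utc, days=7, top=3, offices=OFFICES):
    """Rank hourly slots in a window of up to 7 days by reachable invitees."""
    if not 1 <= days <= 7:
        raise ValueError("window must be between 1 and 7 days")
    slots = [start_utc + timedelta(hours=h) for h in range(days * 24)]
    # Most reachable invitees first; earlier slots win ties.
    ranked = sorted(slots, key=lambda s: (-reachable_invitees(s, offices), s))
    return ranked[:top]


if __name__ == "__main__":
    window_start = datetime(2022, 4, 4, tzinfo=timezone.utc)  # a Monday
    for slot in best_slots(window_start):
        print(slot.isoformat(), reachable_invitees(slot))
```

With these made-up offices, no single hour falls within working hours for all three locations, which is exactly the kind of trade-off a prediction based on past event data is meant to resolve.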

Each time slot recommendation comes with an estimate of how many of your invitees will actually join the event live, how high their engagement with the content will be (presented as an estimated event engagement score), and what quality of video experience users will be able to enjoy.

Let’s zero in on this last point in particular.

Make sure viewers don’t drop off because of video buffering

According to research results published in the International Journal of Human-Computer Studies, even a very slight delay in service connectivity can affect user perceptions, potentially leading others to perceive slow responses as inattentiveness.

In another study published by the journal Computers in Human Behavior, people reported feeling more frustrated during video chats when there was just a one-second (!) delay.

“Even a very slight delay in video connectivity can lead others to perceive slow responses as inattentiveness.”

– International Journal of Human-Computer Studies

In a live video broadcast context, there is simply no way to double-check that all viewers are experiencing low latency and following the speaker in real time. Unless, of course, you are using dedicated analytics tools to monitor the live stream and troubleshoot issues as soon as they arise.

How to use AI to ensure a high quality of video experience

Of course, an ounce of prevention is worth a pound of cure – and nowhere does this ring more true than in the context of live video. The only way to make sure your video meeting runs smoothly is to identify and resolve any potential issues ahead of time (for example, by testing the stream before going live).

However, that’s not the only thing you could be doing.

The good news is that it is now possible to predict not only how many people will join each broadcast and what the overall engagement might look like, but also the average Quality of Experience (QoE) viewers will have, depending on the broadcast time slot you select.

So while there are definitely things you can do to redeem the situation once the event is already live and something goes wrong, the best way to ensure a stable broadcast and keep people on till the very end is to plan for a time when issues are the least likely to occur.

One way to do this is with the latest feature introduced to the Hive Video Analytics suite, which predicts not only attendance and viewer engagement but also the Quality of Experience viewers can expect when joining at a specific time.

Invest in better video and audio equipment

If a broadcast is airing live, there is often no chance to ask the proverbial “Can you hear me?” before starting to speak. Modern AI technology makes it possible to separate the speaker’s voice from background noise in real time, for both incoming and outgoing audio.

While high-quality video and audio are absolutely essential for large broadcasts, even smaller team meetings can improve significantly if participants can clearly hear each other thanks to a quality microphone that removes background noise in real time.

In this case, investing in better hardware can be seen as an inclusion initiative, since it benefits workers who may, for example, be hard of hearing but hesitate to interrupt a meeting by asking the speaker to repeat themselves.

During the virtual event

Speech recognition and transcription

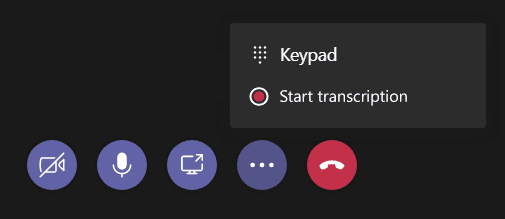

Another way that AI can improve the quality of any video meeting is through speech recognition. With this new functionality now built into popular corporate communication apps like Microsoft Teams, it’s easier than ever to generate an automatic transcript of any meeting with just a click of a button.

Machine learning algorithms can generate meeting transcripts in real time, while the meeting is being recorded, which makes it much easier to revisit the contents of each meeting after the fact without endlessly scrolling through the recording. This also frees participants to really pay attention to the speaker, rather than trying to multitask and take notes.

Live captioning

Another (related) AI capability is live captioning, a feature that is currently available for several video communication platforms, such as Zoom. One company that specializes in exactly this type of speech-to-text transcription is Otter.

While a transcript that can be revisited later is a great feature in itself, live captioning makes it even easier to follow the presenter for people who are not native English speakers or who are hard of hearing.

Real-time translation

If you’re ready to commit to truly making your messaging equally accessible to all, one fantastic AI capability to explore is real-time translation. It works in conjunction with the closed captioning feature, and converts caption texts into the viewer’s native language.

As of the time of writing (April 2022), this feature is only widely available for text captions, but there has been extensive research and experimentation with real-time audio interpretation as well (which will hopefully make its way into Digital Workplace managers’ essential software stacks in the near future).

After the virtual event

Advanced user analytics

User analytics technology has made great strides forward in the past few years, making it possible to get data insights on everything from broadcast stream health to audience sentiment.

As discussed earlier in this article, AI can be used to pick the best-performing time slots as early as the event planning stage. But it’s no less important to understand historical trends in your event engagement, so you can draw conclusions about the type of content that resonates best with your audience and how to improve it so that future events score even higher on engagement.
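As a rough illustration of what looking at historical trends can mean in practice, the sketch below averages engagement scores by content type. The event records, the 0 to 100 score scale, and the function name `average_engagement` are hypothetical placeholders, not real data or an export format from any analytics product.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical past events: (content type, engagement score from 0 to 100).
PAST_EVENTS = [
    ("town hall", 62),
    ("product demo", 78),
    ("town hall", 58),
    ("training", 70),
    ("product demo", 82),
]


def average_engagement(events):
    """Return (content type, mean engagement) pairs, highest average first."""
    scores = defaultdict(list)
    for content_type, score in events:
        scores[content_type].append(score)
    averages = {kind: mean(values) for kind, values in scores.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for kind, avg in average_engagement(PAST_EVENTS):
        print(f"{kind}: {avg}")
```

Even a simple breakdown like this can show which formats are worth repeating, before moving on to more detailed measures such as audience sentiment.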
